Recently, one of our Managed DBA Service customers asked us to investigate a stored procedure that was timing out, preventing hundreds of users from getting data.

We were given a set of parameters with which to reproduce the problem and when we executed the procedure, it took 1 minute and 44 seconds. However, it was executing in under 2 seconds on previous days and with other parameter combinations.

We isolated the slow performance to a single dynamic SQL statement that was being executed with sp_executesql, pulled out the dynamic SQL string and executed it manually. The statement executed in 2 seconds and the execution plan was completely different to the one generated by the sp_executesql call.

All this information is highly suggestive that parameter sniffing was causing a problem, a common SQL Server DBA scenario.

What is parameter sniffing?

When SQL Server first executes a piece of code, it uses (sniffs) any parameter values to generate an execution plan which is then cached. Creating an execution plan can be expensive so SQL Server tries to re-use them whenever possible.

Problems occur when the distribution of values in the tables is wildly uneven so that a good plan for one set of parameters becomes terrible when re-used for another set.

Parameter sniffing itself isn’t a problem, that’s just how SQL Server works, but we only really talk about it in the context of it causing a problem due to the uneven distribution of values in the underlying data.

Can parameter sniffing affect dynamic SQL?

In short, yes, and here is an example using the AdventureWorks sample database to illustrate this and what you can do about it.

First, create a simple procedure that selects from the SalesOrderDetail table in AdventureWorks. If you don’t have a copy of AdventureWorks you can download it from Microsoft.

create procedure parameter_sniffing_dynamic_sql (@ProductID int) as declare @sqlcmd nvarchar(200); set @sqlcmd = 'select SalesOrderId, SalesOrderDetailID, CarrierTrackingNumber from Sales.SalesOrderDetail where ProductID = @ProductID'; exec sp_executesql @sqlcmd, N'@ProductID INT', @ProductID; go

Now you can execute the procedure and pass through two different parameters:

dbcc freeproccache -- clear all existing plans from cache go exec parameter_sniffing_dynamic_sql @ProductID = 897 go exec parameter_sniffing_dynamic_sql @ProductID = 870 go

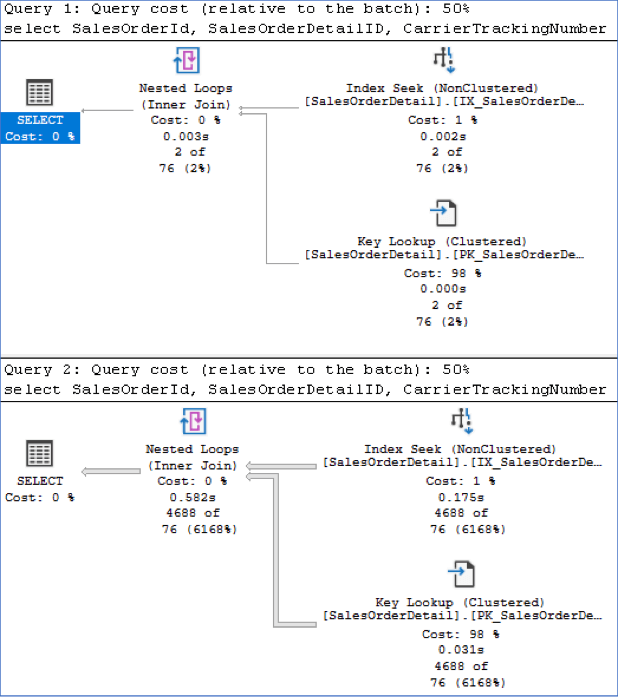

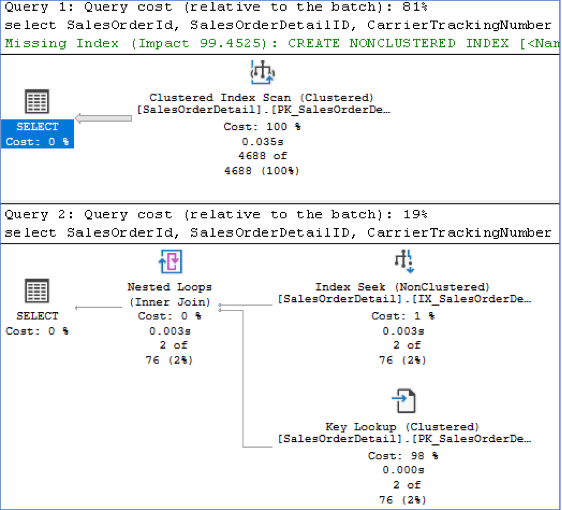

Here is the resulting execution plan for both executions:

Notice that they are identical. The first time the procedure executes, the resulting execution plan is cached, and subsequent executions of the procedure make use of this cached plan.

The first execution took 51ms to execute and performed 12 logical reads.

The second execution took 668ms to execute and performed 21,649 logical reads.

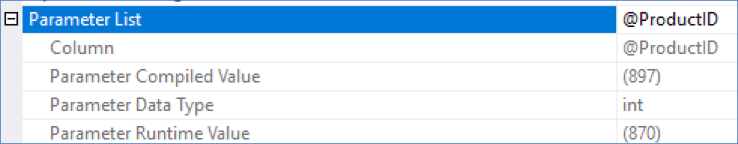

Looking at the Properties of the execution plan (press F4 and click on the SELECT operator in the execution plan for Query 2 above) you can expand the “Parameter List” node to see the values in the image below.

As you can see, the compile value for the parameter is 897 (the procedure we executed first). However, the runtime value is 870.

Now execute the procedures again, but in the reverse the order:

dbcc freeproccache -- clear all existing plans from cache go exec parameter_sniffing_dynamic_sql @ProductID = 870 go exec parameter_sniffing_dynamic_sql @ProductID = 897 go

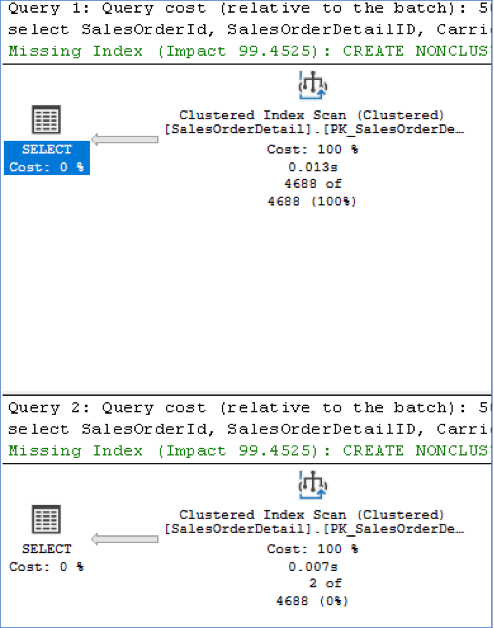

Now you can see that SQL Server’s optimizer has chosen to perform an index scan on the clustered index. In this instance, because ProductID 870 returns 4688 rows, SQL Server has determined that an index scan is more efficient than performing an index seek with a key lookup, and the numbers confirm this. The first execution took 221ms to execute, which is three times faster than when it was using the index seek and key lookup. Additionally, it only performed 1,248 logical reads compared to 21,649.

However, the second execution is now also using an index scan and this has impacted its performance. It now takes 115ms to execute (twice as slow) and performs 1,248 logical reads compared to 12.

Clearly, neither execution plan is optimal for both executions and whilst this example results in little perceived performance difference, on a production environment the difference between an optimal and a suboptimal plan can be minutes as was the case with our customer.

How do you fix a parameter sniffing problem in dynamic SQL?

Dynamic SQL behaves much like T-SQL and in this case, you can use query hints such as OPTION (RECOMPILE) or OPTION (OPTIMIZE FOR [x]).

In the case of our customer, OPTION RECOMPILE worked well. The procedure was already a complex procedure with a CPU time of around 1000+ms and the overhead of recompiling was just 4ms. Given that the procedure was not executing frequently, this made the decision easier.

Coming back to our example, you can could use OPTION RECOMPILE within the dynamic T-SQL:

create procedure parameter_sniffing_dynamic_sql (@ProductID int) as

declare @sqlcmd nvarchar(200);

set @sqlcmd = 'select SalesOrderId, SalesOrderDetailID, CarrierTrackingNumber

from Sales.SalesOrderDetail

where ProductID = @ProductID OPTION(RECOMPILE)';

exec sp_executesql @sqlcmd, N'@ProductID INT', @ProductID;

go

Now when you execute with both sets of parameters you’ll get a good plan for each execution:

The first execution uses an index scan and the second one uses a seek and key lookup.

Note that it is possible to execute the sp_executesql procedure WITH RECOMPILE:

exec sp_executesql @sqlcmd, N'@ProductID INT', @ProductID WITH RECOMPILE;

However this recompiles the sp_executesql procedure itself, not your code, so you have to use it at the statement level as shown above.

Final thoughts

Recompiling a stored procedure or a statement is no substitute for properly tuning your queries. In the above examples, you will note that optimizer is recommending an index on ProductID that INCLUDES the CarrierTrackingNumber column. If you were to create this index then the statement would be even more optimal because SQL Server could use an index seek without the need for key lookup. In this example, parameter sniffing would not cause a problem and both executions would execute faster.

With the index applied, the statements perform 3 logical reads and execute in under 50ms so whilst it is tempting to just recompile, make sure you properly tune the statements and indexes first.

If you have to recompile, choose the smallest unit of code to recompile. In the examples above, one could argue that we might as well have recompiled the whole stored procedure. However, a stored procedure often consists of many statements and many lines of code and recompiling an entire stored procedure can be quite expensive.

Try and stick to individual statements that you have identified as been susceptible to a parameter sniffing problem – and only when you are unable to tune the queries. Along with this line of thinking, rather than recompiling, try OPTION OPTIMZE FOR, which will allow you to be even more targeted.

Should I put option recompile on all my dynamic SQL statements?

This can be really tempting – after all, we just made a procedure execute in 1 min 42 seconds faster just by using OPTION RECOMPILE, so why not do it for everything?

Because often recompiling can result in worse performance.

Caching of execution plans is there to help speed things up and is generally a really good thing. Parameter sniffing is just how SQL Server works so it’s happening all the time and largely goes unnoticed.

However, for those rare occasions where you have identified parameter sniffing as causing a problem and you have evaluated the impact of using OPTION RECOMPILE in that instance, it can certainly save the day. Like any other tool, it is a valuable one to have in the toolbox – just not the only one.